Today, data is everywhere, and companies need faster and smarter ways to analyze it. Serverless Data Analytics helps organizations process, analyze, and visualize data without managing servers. Using tools like Azure Functions and Azure Databricks, teams can build automated, scalable, and cost‑effective data analytics solutions for real‑world business needs.

Every time someone buys something online, opens an app, or clicks a button, data is created. But raw data alone is useless. Companies need people who can clean it, process it, and turn it into meaningful insights.

This is where Serverless Data Analytics using Azure Functions and Databricks comes in.

Don’t worry if this sounds complicated right now. By the end of this blog, it will feel simple and logical.

What Does “Serverless” Really Mean?

Let’s keep this very simple.

Serverless does NOT mean there are no servers. It means you don’t manage them.

Think about using WhatsApp:

You send a message

You don’t care where the server is

It just works

Serverless works the same way.

You write code. Azure runs it. Azure handles everything else.

No setup headache

No server maintenance

Pay only when it runs

Why Azure Serverless Analytics Is Important for Your Career

Companies today don’t want slow systems or manual work.

They want:

Automated data processing

Scalable systems

Cloud‑based solutions

If you know how to build serverless analytics pipelines, you already stand out as:

Data Analyst

Data Engineer

Cloud Data Engineer

This is why ConsoleFlare teaches these concepts in a practical, job‑focused way.

Let’s Understand the Tools

Azure Functions - The Trigger Button

Azure Functions is like a switch.

When something happens:

A file is uploaded

A time is reached (daily, hourly)

An API is called

Azure Functions runs your code automatically.

You don’t start it. You don’t stop it. Azure does everything.
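For the time-based trigger, Azure Functions expects a six-field NCRONTAB expression (second, minute, hour, day, month, day of week). The sketch below shows what "daily" and "hourly" could look like in that format. The schedule names, the 02:00 run time, and the `describe_schedule` helper are illustrative assumptions, not part of any Azure SDK.

```python
# Azure Functions timer triggers use six-field NCRONTAB expressions:
# {second} {minute} {hour} {day} {month} {day-of-week}
NCRONTAB_FIELDS = ("second", "minute", "hour", "day", "month", "day_of_week")

# Illustrative schedules (the chosen times are assumptions, not from the article).
SCHEDULES = {
    "hourly": "0 0 * * * *",     # at minute 0 of every hour
    "daily_2am": "0 0 2 * * *",  # every day at 02:00:00
}


def describe_schedule(expression: str) -> dict:
    """Split an NCRONTAB expression into named fields, checking the field count."""
    parts = expression.split()
    if len(parts) != len(NCRONTAB_FIELDS):
        raise ValueError(f"expected 6 fields, got {len(parts)}: {expression!r}")
    return dict(zip(NCRONTAB_FIELDS, parts))


if __name__ == "__main__":
    for name, expression in SCHEDULES.items():
        print(name, describe_schedule(expression))
```

A common mistake is pasting a five-field cron string; the helper rejects it because the leading seconds field is missing.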

Azure Databricks - The Heavy Worker

Azure Databricks is where real data work happens.

It is used to:

Handle big data

Clean messy data

Transform raw data into useful data

It uses PySpark, the Python API for Apache Spark, which spreads work across many machines and so suits large datasets.

If Excel feels slow, Databricks feels like a rocket.

Azure Storage - The Store Room

This is where data lives.

Raw data comes here

Processed data goes here

Power BI - The Showroom

Power BI is where data becomes charts, reports, and dashboards.

Managers don’t want tables. They want visuals.

Power BI makes that easy.

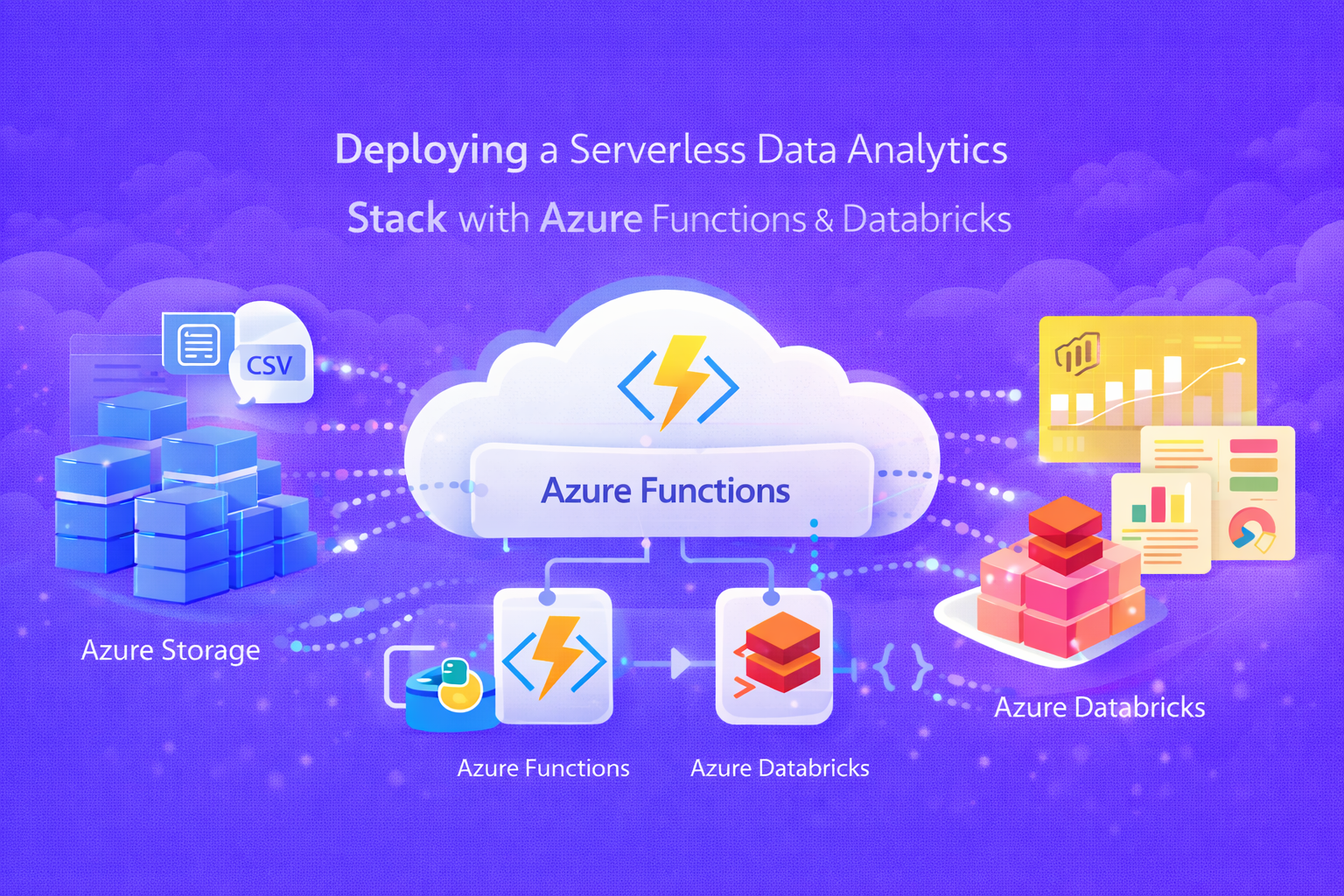

How Azure Serverless Analytics Works Together

Let’s connect all the dots.

Data comes into Azure Storage

Azure Function gets triggered

It tells Databricks to start work

Databricks processes data

Clean data is saved

Power BI shows an updated dashboard

That’s it. This is a real‑world industry workflow.

Let’s Build It Step by Step

No rushing. Let’s go slowly.

Step 1: Set Up Azure

Create:

Azure account

Resource group

Databricks workspace

This builds your cloud foundation.

Step 2: Create Databricks Cluster

Think of a cluster as:

A group of machines that does heavy data work for you.

Create a cluster. Attach a notebook. You’re ready.

Step 3: Process Data Using PySpark

Let’s take a small example.

Sample Sales Data

```csv
order_id,customer,region,amount,order_date
101,Amit,North,1200,2024-01-05
102,Neha,South,,2024-01-06
103,Rahul,East,850,2024-01-07
104,Pooja,West,1500,2024-01-08
```

Start Spark

```python
from pyspark.sql import SparkSession

# In a Databricks notebook a session already exists, so getOrCreate() returns it.
spark = SparkSession.builder.appName("DataAnalytics").getOrCreate()
```

Read the Data

```python
# Read the CSV with a header row and let Spark infer the column types.
df = (
    spark.read.option("header", "true")
    .option("inferSchema", "true")
    .csv("/mnt/raw/sales_data.csv")
)

df.show()
```

Clean the Data

Remove rows where the amount is missing.

```python
from pyspark.sql.functions import col

# Keep only rows that have an amount (this drops order 102 in the sample).
clean_df = df.filter(col("amount").isNotNull())
```

Get Total Sales by Region

```python
from pyspark.sql import functions as F  # avoids shadowing Python's built-in sum

# Add up the amount column within each region.
result = clean_df.groupBy("region").agg(F.sum("amount").alias("total_sales"))

result.show()
```

Save Clean Data

```python
# Overwrite any previous output with the latest regional totals.
result.write.mode("overwrite").parquet("/mnt/processed/region_sales")
```

This data is now ready for Power BI.
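To sanity-check what the PySpark steps above should produce, the same filter and group-by can be reproduced on the four sample rows using only Python's standard library. This is a minimal sketch for checking the logic, not a replacement for Spark; the function name `total_sales_by_region` is made up for illustration.

```python
import csv
import io
from collections import defaultdict

# The four sample rows from the article; order 102 has a blank amount.
SAMPLE_CSV = """order_id,customer,region,amount,order_date
101,Amit,North,1200,2024-01-05
102,Neha,South,,2024-01-06
103,Rahul,East,850,2024-01-07
104,Pooja,West,1500,2024-01-08
"""


def total_sales_by_region(csv_text: str) -> dict:
    """Drop rows with a missing amount, then sum the amount per region."""
    totals = defaultdict(int)
    for row in csv.DictReader(io.StringIO(csv_text)):
        if row["amount"] == "":  # mirrors filter(col("amount").isNotNull())
            continue
        totals[row["region"]] += int(row["amount"])
    return dict(totals)


if __name__ == "__main__":
    print(total_sales_by_region(SAMPLE_CSV))
    # {'North': 1200, 'East': 850, 'West': 1500}
```

South does not appear in the result because its only order had no amount and was removed during cleaning.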

Step 4: Automate Azure Serverless Analytics with Azure Functions

Now comes the smart part.

Azure Function:

Runs this Databricks job automatically

Can run daily or when new data arrives

This removes the manual step of starting the job yourself.
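One way a Function could start the Databricks job is by calling the Databricks Jobs API `run-now` endpoint. The sketch below only builds the HTTP request, so it runs without an Azure or Databricks account. The workspace URL, token, job ID, and the `input_path` notebook parameter are placeholders and assumptions; it also assumes the notebook from Step 3 has already been saved as a Databricks job.

```python
import json
import urllib.request


def build_run_now_request(workspace_url: str, token: str, job_id: int,
                          input_path: str) -> urllib.request.Request:
    """Build (but do not send) a Databricks Jobs API 2.1 run-now request."""
    payload = {
        "job_id": job_id,
        # "input_path" is a hypothetical notebook parameter name.
        "notebook_params": {"input_path": input_path},
    }
    return urllib.request.Request(
        url=f"{workspace_url.rstrip('/')}/api/2.1/jobs/run-now",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Placeholder values; a real Function would read these from its app settings.
    request = build_run_now_request(
        "https://adb-0000000000000000.0.azuredatabricks.net",
        "<databricks-token>",
        job_id=123,
        input_path="/mnt/raw/sales_data.csv",
    )
    print(request.get_method(), request.full_url)
    # Sending is left out on purpose: urllib.request.urlopen(request)
```

Inside an actual Azure Function, the trigger handler would call a helper like this and then send the request. Keeping the token in application settings rather than in code is the safer choice.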

Step 5: Create a Power BI Dashboard

Connect Power BI to processed data.

Create:

Charts

KPIs

Reports

Now, business users can see insights instantly.

Real‑Life Example

Imagine this scenario:

Sales data comes every night

The system runs automatically

Dashboard updates by morning

No manual effort. No waiting. This is how companies work.

What You Actually Learn from This

By learning this stack, you gain:

Python for data

PySpark & Big Data

Azure Cloud

Serverless workflows

Power BI dashboards

Production‑level thinking

This is exactly what ConsoleFlare focuses on.

Career Roles This Prepares You For

After understanding this properly, you are ready for:

Data Analyst

Big Data Analyst

Data Engineer

Power BI Developer

Cloud Data Engineer

These roles are high‑demand and high‑paying.

At ConsoleFlare, we believe:

Anyone can learn data skills - if taught step by step.

Related posts:

1. Best Practices for Data Partitioning and Optimization in Big Data Systems

2. Architecting Robust ETL Workflows Using PySpark in Azure

Conclusion:

Working with data in real-world settings is made easier by deploying a serverless data analytics stack with Azure Functions and Databricks. Without worrying about infrastructure management, it enables data professionals to create scalable analytics pipelines, automate data processing, and provide trustworthy insights. At ConsoleFlare, we assist professionals and students in gaining practical experience with serverless analytics, Azure cloud services, Databricks, and contemporary data engineering tools. Learners gain confidence in their ability to process large amounts of data, design automated workflows, and develop analytics solutions that address actual business issues through hands-on, industry-level projects. The courses offered by ConsoleFlare help you advance your knowledge of data analytics, data engineering, and cloud technologies from fundamental ideas to implementation that is ready for production.

Follow us for more learning resources and practical insights into data analytics and cloud engineering. Stay connected with ConsoleFlare on LinkedIn and Facebook.